Deep Dive: Agentic AI in Financial Crime Fighting

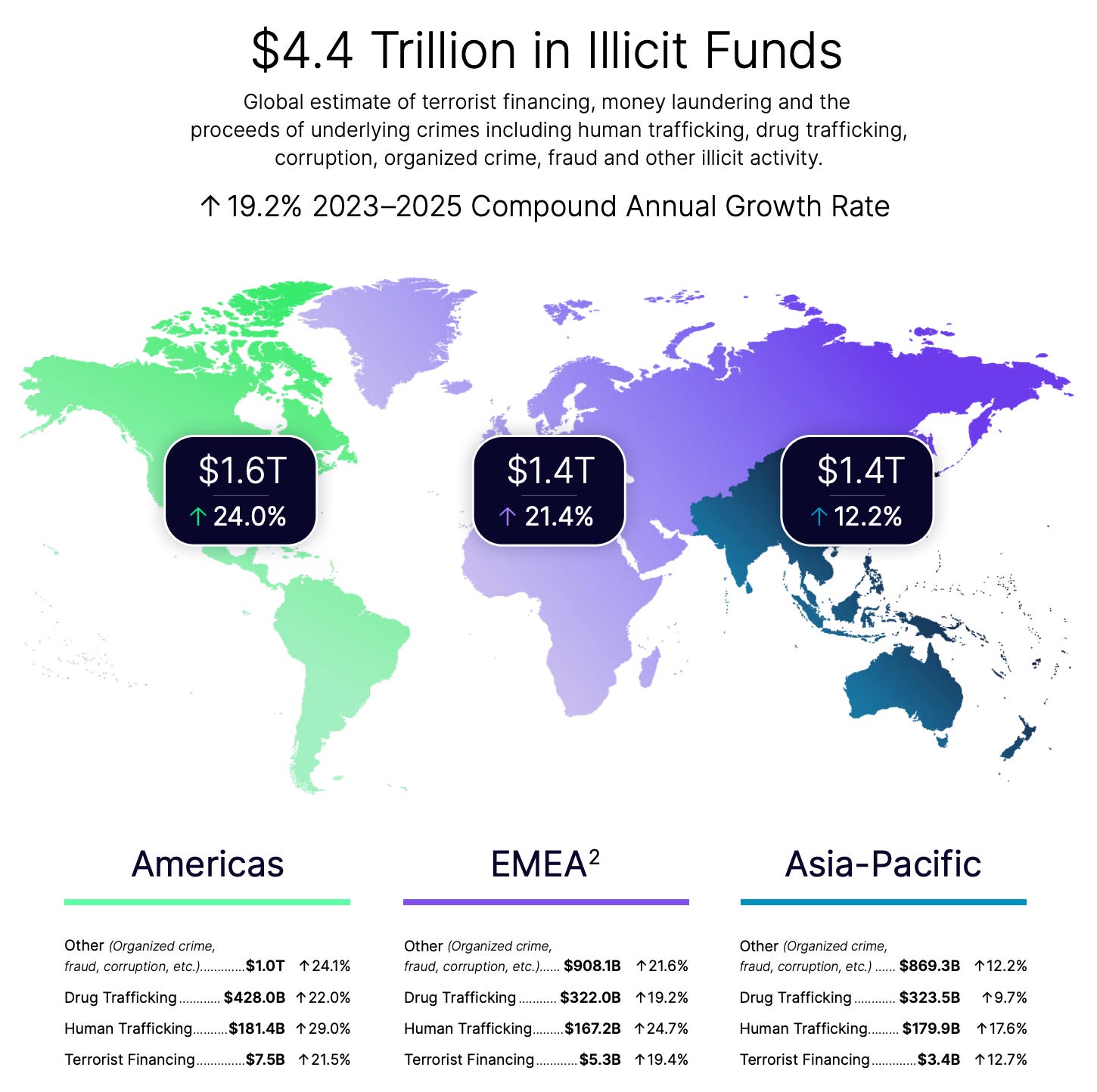

The global financial system is facing a structural crisis. In 2025, illicit financial activity surged to an estimated $4.4 trillion, a $1.3 trillion increase over just two years. That 19.2% compound annual growth rate indicates that financial crime is not merely increasing; it is industrializing. Legacy compliance models are failing under this surge. Traditional rule-based systems and manual human review cannot keep pace with adversarial AI, because analyst capacity grows with headcount while automated attacks do not.
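As a quick sanity check on the growth figure, the compound annual growth rate can be recomputed from the two rounded headline numbers. This is a minimal sketch: the function name `implied_cagr` is my own, and because it uses the rounded $4.4 trillion and $1.3 trillion figures, it lands at roughly 19%, slightly off the reported 19.2%, which presumably comes from unrounded inputs.

```python
def implied_cagr(end_value: float, increase: float, years: int) -> float:
    """Compound annual growth rate implied by an end value and the total increase."""
    start_value = end_value - increase
    return (end_value / start_value) ** (1 / years) - 1


# Rounded headline figures from the paragraph above, in trillions of USD.
rate = implied_cagr(end_value=4.4, increase=1.3, years=2)
print(f"Implied CAGR from rounded figures: {rate:.1%}")
```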

The industry currently operates in a state of high-cost inefficiency. Banks commonly allocate 10% to 15% of their total headcount to Know Your Customer (KYC) and Anti-Money Laundering (AML) activities, yet they detect only about 2% of global financial crime flows. This gap between operational spend and effectiveness is the “compliance trap.” I believe agentic AI is the only credible way out of it.

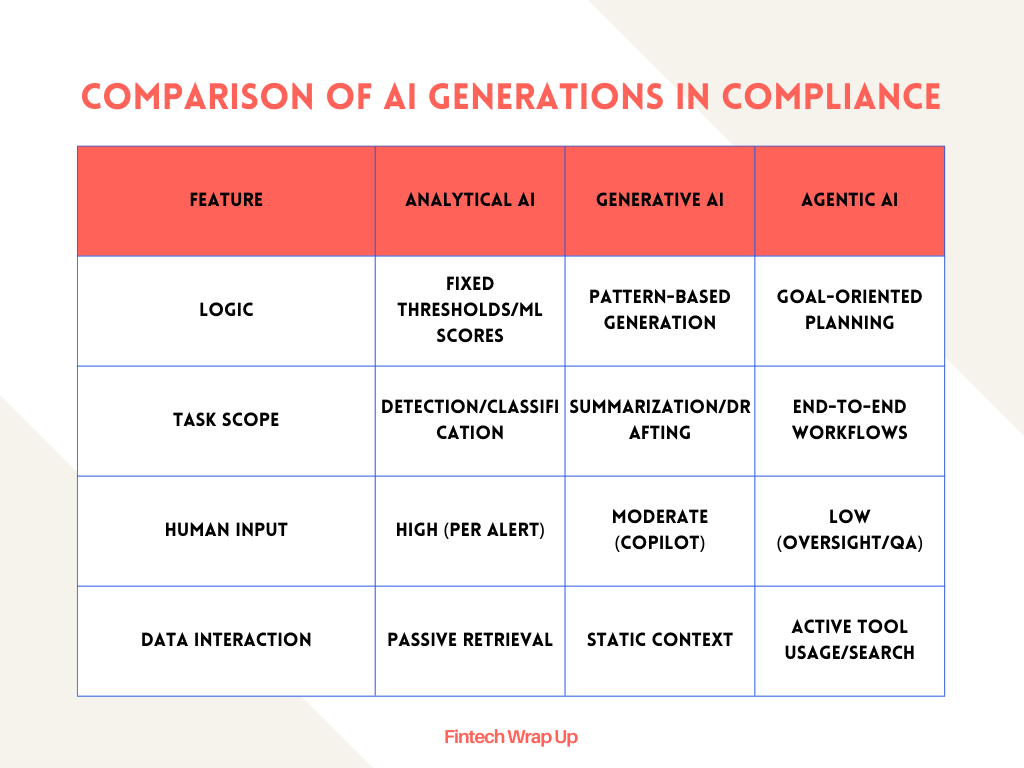

Agentic AI represents a shift from “assistive” technology to “autonomous” execution. While generative AI (GenAI) summarizes data and analytical AI identifies patterns, agentic AI has the capacity to plan, execute, and adapt sequences of actions to reach a specific objective. It is the difference between a chatbot that writes a summary and a digital worker that investigates a case.

The $4.4 trillion market of illicit activity

The scale of global financial crime in 2026 is staggering. Criminal networks now operate with the coordination and scale of multinational corporations. Fraud scams are growing at 19.3%, nearly twice the rate of traditional bank fraud. This growth is powered by AI-driven attacks that overwhelm static defenses.

Global Financial Crime Landscape 2025-2026

Ninety percent of financial crime professionals report a measurable increase in AI-driven attacks. These attacks use automation to create synthetic identities, execute deepfake-driven scams, and coordinate cross-channel fraud campaigns. I view the current state of financial crime as a “scamdemic” that necessitates a move from reactive policing to predictive defense.

The obsolescence of no-code and rule-based frameworks

For a decade, “no-code” was the benchmark for risk operations. It allowed compliance teams to build rules without engineering support. However, as crime volumes escalated, the analyst became the bottleneck. In traditional AML, up to 95% of alerts are false positives. Building a single Suspicious Activity Report (SAR) can take four or more days.

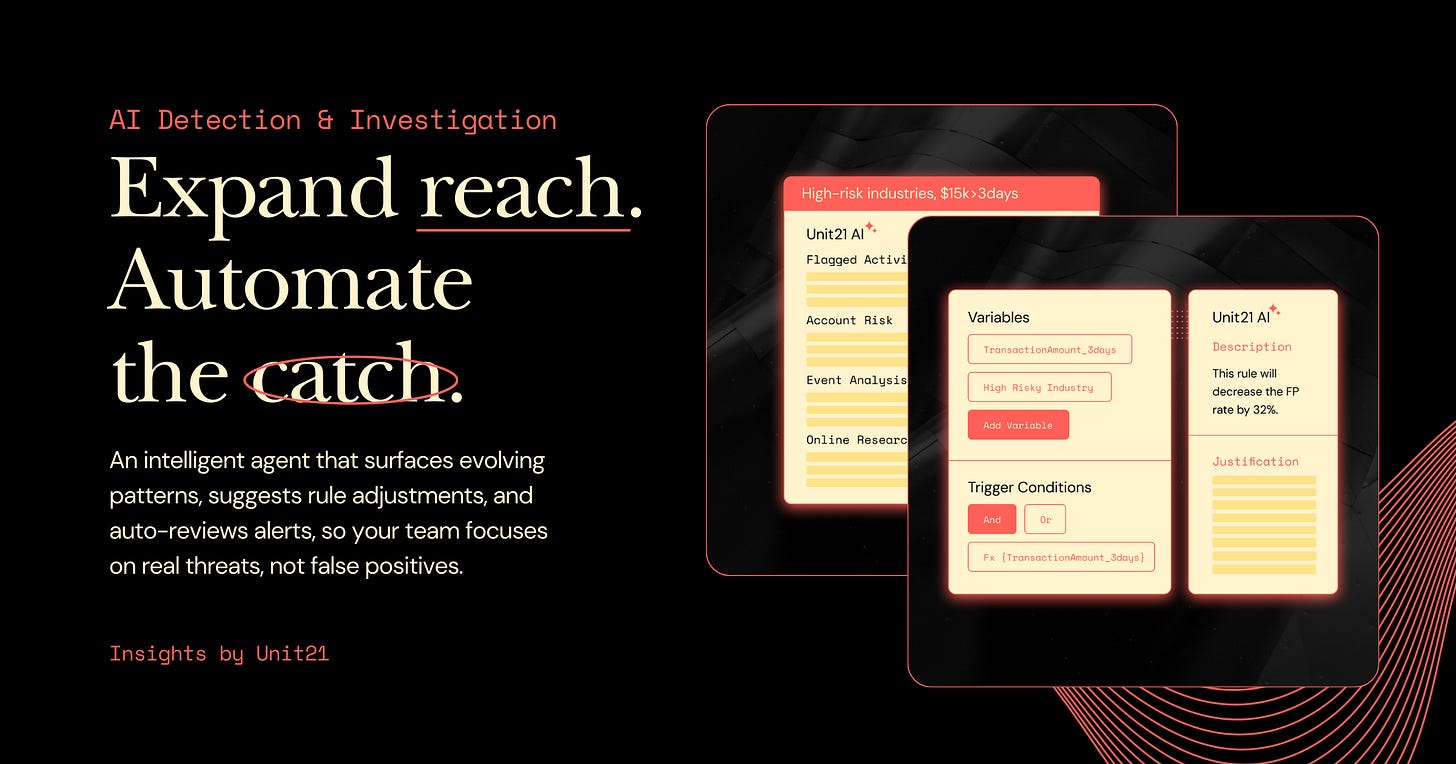

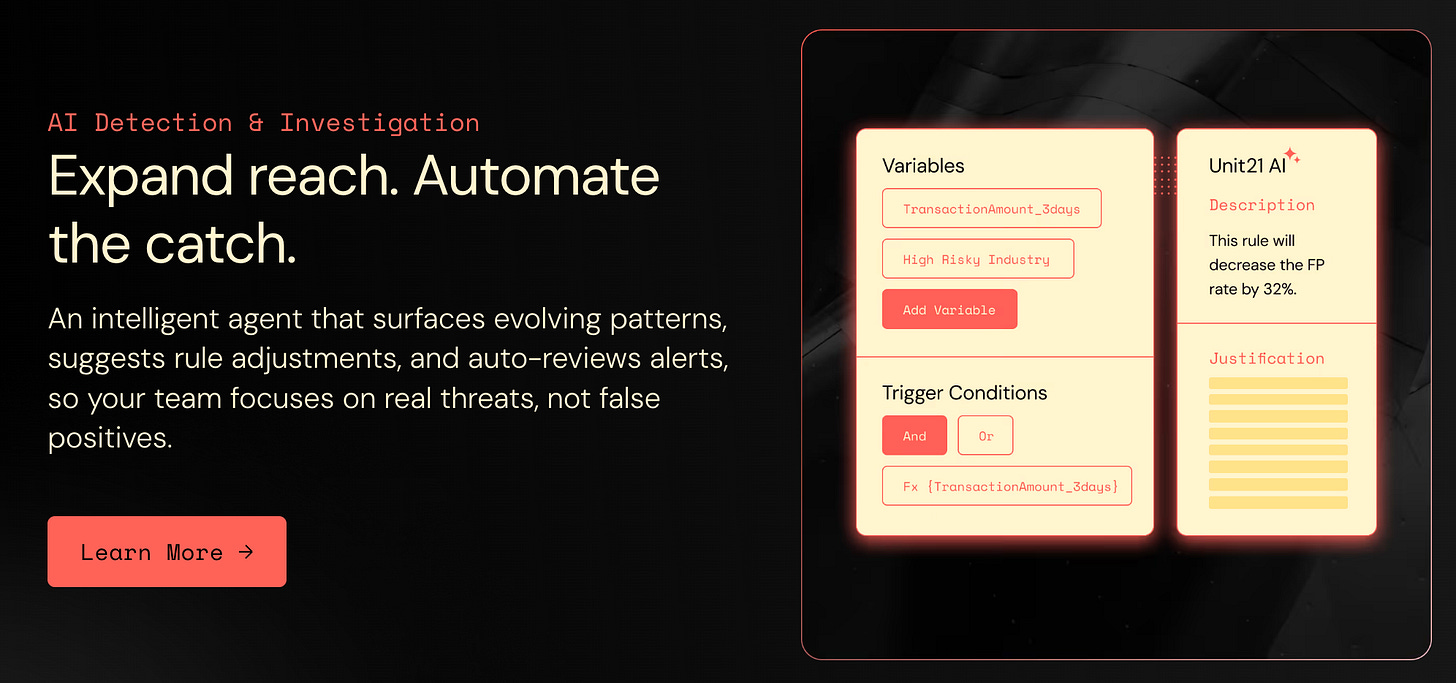

No-code tooling is no longer sufficient. The requirement is now for AI Risk Infrastructure. This infrastructure executes the full financial crime lifecycle: detecting risk in real time, investigating alerts end-to-end, and producing regulator-ready filings. Unit21’s 2026 relaunch signals this transition. Its platform moved from a no-code rules engine to an agentic system in which AI agents tune detection logic and conduct investigations without human analysts driving every step.

Defining agentic AI in risk operations

Agentic AI refers to systems that act with a degree of autonomy toward defined goals. In financial crime fighting, this means the AI can decide which data sources to query, how to interpret inconsistent information, and when to escalate a case.

Comparison of AI Generations in Compliance

Agentic AI offers a potential 20-fold productivity increase over manual practitioners. I categorize these agents into squads that mirror human roles along the value chain. Retrieval-Augmented Generation (RAG) agents handle data extraction from profit-and-loss statements and beneficial ownership documents. Data pipeline agents orchestrate ETL processes and perform entity resolution across fragmented datasets. Research agents monitor market trends and counterparty patterns, while validation agents review other agents’ outputs to ensure quality.

Context engineering vs prompt engineering

The hardest engineering challenge in agentic AI is not writing better prompts; it is context engineering. To produce an auditable investigation narrative, the model must receive exactly the right evidence without overloading its context window. LLMs are based on the transformer architecture, where every token attends to every other token, resulting in n² pairwise relationships for n tokens. Attention therefore becomes scarce as context length increases.
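To make the quadratic growth concrete, here is a small illustrative calculation. The function name is hypothetical, and the count reflects plain full attention only; it ignores any architectural optimizations a particular model may use.

```python
def pairwise_relationships(n_tokens: int) -> int:
    """Token-to-token relationships under full attention for a context of n tokens."""
    return n_tokens * n_tokens


# Growing the context 10x multiplies the number of relationships by 100.
for n in (1_000, 10_000, 100_000):
    print(f"{n:>7,} tokens -> {pairwise_relationships(n):>17,} pairs")
```

This is why adding "just in case" evidence to an investigation prompt is not free: every extra token competes with the high-signal ones for attention.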

Effective context engineering is the science of curating high-signal tokens to maximize the likelihood of a desired outcome. For example, Unit21 leverages its rich dataset from seven years of human reviews to work out the optimal context required to complete given tasks. These tasks are then evaluated against historical human investigations, completed by high-performing analysts, to ensure correctness, consistency, and effectiveness.

Evaluation is performed using “LLM-as-a-judge” architectures. A secondary, more capable model evaluates the quality of the primary agent’s output, creating a self-checking layer that flags inconsistencies before they reach a human reviewer. This is supplemented by citation validation, where the system verifies that agent claims are grounded in retrieved data rather than model inference.
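Citation validation can be sketched in a few lines. This is a simplified illustration, not any vendor's implementation: it assumes the agent tags each claim with bracketed evidence IDs such as `[E1]` (a convention I am inventing here), and it only checks that each cited ID exists in the retrieved set. Checking that the evidence actually supports the claim would be the job of the judge model.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    evidence_id: str  # hypothetical ID scheme, e.g. "E1"
    text: str


CITATION = re.compile(r"\[(E\d+)\]")


def validate_citations(narrative: str, retrieved: list[Evidence]) -> dict[str, list[str]]:
    """Sort narrative sentences into grounded, uncited, and unknown-citation groups."""
    known_ids = {e.evidence_id for e in retrieved}
    report: dict[str, list[str]] = {"grounded": [], "uncited": [], "unknown_citation": []}
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", narrative) if s.strip()]
    for sentence in sentences:
        cited = CITATION.findall(sentence)
        if not cited:
            report["uncited"].append(sentence)           # likely model inference
        elif all(c in known_ids for c in cited):
            report["grounded"].append(sentence)          # every cited ID was retrieved
        else:
            report["unknown_citation"].append(sentence)  # cites evidence that was never retrieved
    return report


retrieved = [Evidence("E1", "14 inbound wires, 3 days"), Evidence("E2", "KYC profile")]
narrative = (
    "The customer received 14 inbound wires in 3 days [E1]. "
    "The funds were moved offshore [E9]. "
    "This pattern suggests layering."
)
report = validate_citations(narrative, retrieved)
```

In this example the first sentence is grounded, the second cites evidence that was never retrieved, and the third carries no citation at all, so the last two would be flagged before reaching a human reviewer.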

The AI investigation workflow

When an alert enters the queue, the AI Investigation Agent follows a structured workflow rather than starting from a blank page.

Signal gathering: The agent retrieves the transaction history, entity profile, risk scores, and watchlist matches. It navigates across disparate screens to assemble the context a senior analyst would require.

Workflow orchestration: The agent follows modular steps configured to the institution’s standard operating procedures (SOPs). This includes checking prior alert history, running OSINT searches, and cross-referencing sanctions lists.

Findings assembly: The agent produces a structured package containing a written narrative, evidence logs, and a recommended disposition. The reasoning is explicit and traceable.

The “human-in-the-loop” model remains the default for final dispositions. Analysts approve, modify, or override the agent’s package, ensuring human accountability.
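The three stages and the human sign-off can be sketched as a small pipeline. Everything here is hypothetical: the class and function names, the signal keys, and especially the toy escalation rule, which stands in for whatever logic an institution's SOPs would actually define.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Optional


@dataclass
class CasePackage:
    """Structured findings handed to a human analyst."""
    alert_id: str
    signals: dict[str, Any]
    evidence_log: list[str] = field(default_factory=list)
    narrative: str = ""
    recommended_disposition: str = "needs_review"
    final_disposition: Optional[str] = None  # only a human sets this


SopStep = Callable[[CasePackage], None]


def check_prior_alerts(case: CasePackage) -> None:
    case.evidence_log.append(f"prior_alerts={case.signals.get('prior_alerts', 0)}")


def check_sanctions(case: CasePackage) -> None:
    hits = case.signals.get("watchlist_matches", [])
    case.evidence_log.append(f"watchlist_matches={len(hits)}")


def investigate(alert_id: str,
                signal_source: Callable[[str], dict[str, Any]],
                sop_steps: list[SopStep]) -> CasePackage:
    # 1. Signal gathering: pull everything a senior analyst would want to see.
    case = CasePackage(alert_id=alert_id, signals=signal_source(alert_id))
    # 2. Workflow orchestration: run the institution's configured SOP steps in order.
    for step in sop_steps:
        step(case)
    # 3. Findings assembly: toy rule (an assumption), with the evidence log as the trace.
    escalate = bool(case.signals.get("watchlist_matches")) or case.signals.get("prior_alerts", 0) >= 3
    case.recommended_disposition = "escalate" if escalate else "close"
    case.narrative = f"Alert {alert_id}: " + "; ".join(case.evidence_log)
    return case


def analyst_review(case: CasePackage, decision: Optional[str] = None) -> CasePackage:
    """Human-in-the-loop: the analyst approves the recommendation or overrides it."""
    case.final_disposition = decision or case.recommended_disposition
    return case


def fake_source(alert_id: str) -> dict[str, Any]:
    """Stand-in for real data retrieval; the values are made up."""
    return {"prior_alerts": 1, "watchlist_matches": ["LIST-ENTRY-1"]}


case = investigate("A-1", fake_source, [check_prior_alerts, check_sanctions])
```

The design point is that the agent never writes `final_disposition`; it can only recommend, and the field stays empty until `analyst_review` is called.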

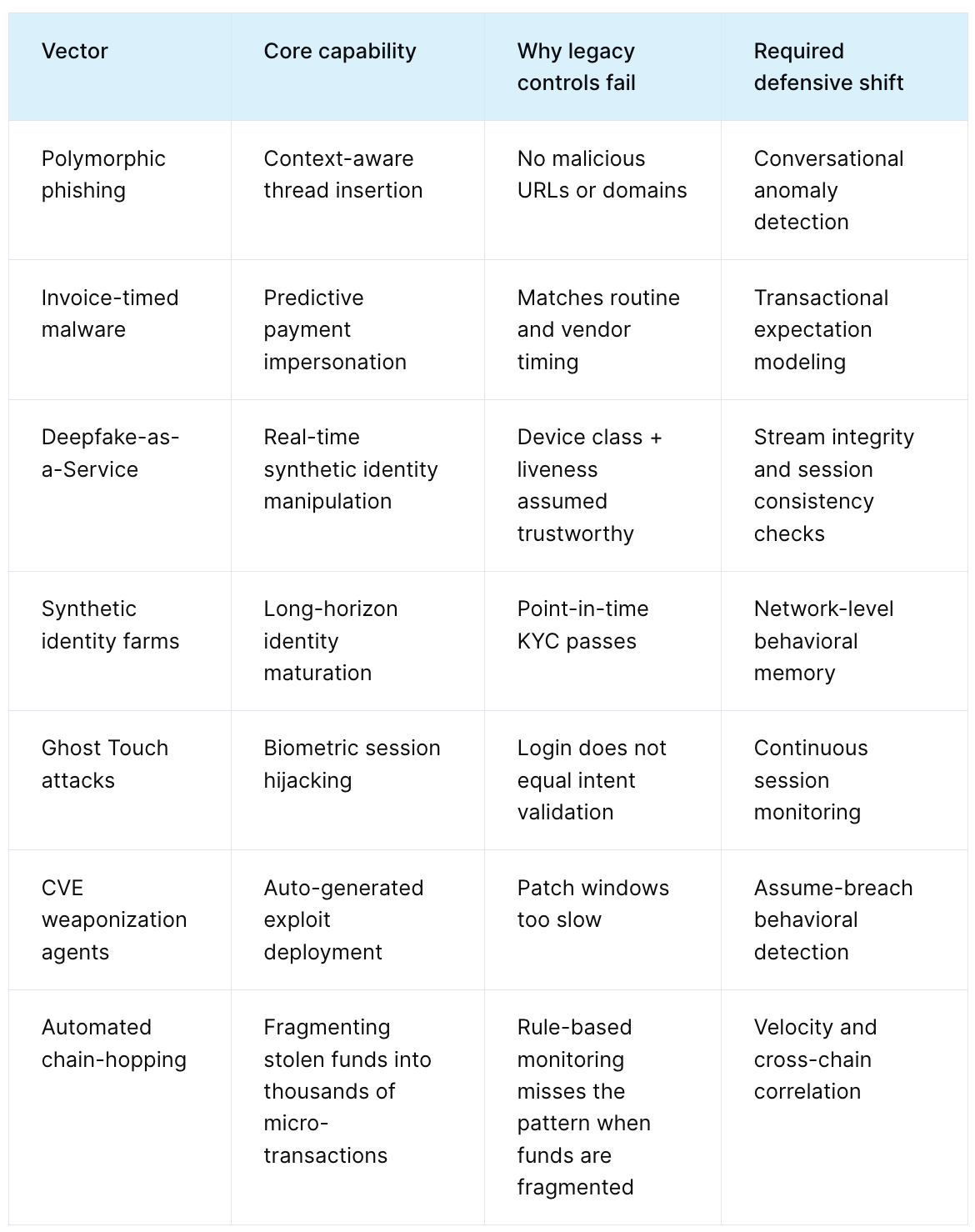

The rise of agentic attacks

Financial crime is accelerating because the attackers are already agentic. In 2026, we are watching seven specific AI-driven fraud vectors and agentic attacks.

1. Polymorphic phishing agents

These agents do not send mass emails. They embed themselves in compromised inboxes, analyze historical threads, and insert themselves into high-trust conversations. They mirror the victim’s typing rhythms and working hours to bypass reputation-based security systems.

2. Invoice-timed malware

Malware families like Predator map business relationships and invoice schedules. They send counterfeit invoices a day before the legitimate ones are expected, targeting operational psychology where speed often overrides scrutiny.

3. Deepfake-as-a-Service (DFaaS)

Attackers use real-time APIs for voice cloning and video manipulation to bypass biometric liveness checks. Deepfake fraud jumped by 1100% in the US and 3400% in Canada between 2025 and 2026.

4. Synthetic identity farms

AI agents manage synthetic profiles, programmatically building credit history over 18 months. These identities remain dormant until a coordinated “activation event” triggers them to drain balances across multiple institutions simultaneously.

5. Ghost touch attacks

Remote Access Trojans combined with electromagnetic interference (EMI) hardware allow attackers to inject touch inputs and install malware without physical interaction.

6. Automated CVE weaponization

Agents ingest public vulnerability disclosures and generate functional exploit code at machine speed, attacking systems before they can be patched.

7. Automated chain-hopping

Laundering agents orchestrate cross-chain strategies, fragmenting stolen funds into tens of thousands of micro-transactions under $10. This makes the economic cost of manual tracing higher than the value of the assets.
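The economics of that last vector are easy to sketch. All inputs below are hypothetical numbers chosen for illustration (the stolen amount, minutes per trace, and analyst cost are not from any report); the point is only that once the cost of tracing one fragment exceeds the fragment's value, manual tracing loses money on every hop.

```python
import math


def tracing_economics(stolen_usd: float, fragment_usd: float,
                      minutes_per_trace: float, analyst_hourly_usd: float) -> tuple[float, bool]:
    """Return (total manual tracing cost, whether it exceeds the stolen amount)."""
    fragments = math.ceil(stolen_usd / fragment_usd)
    cost_per_trace = (minutes_per_trace / 60) * analyst_hourly_usd
    total_cost = fragments * cost_per_trace
    return total_cost, total_cost > stolen_usd


# Hypothetical case: $200,000 split into $10 fragments, 15 minutes per trace at $60/hour.
cost, uneconomic = tracing_economics(200_000, 10, 15, 60)
print(f"Tracing cost: ${cost:,.0f}, uneconomic: {uneconomic}")
```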

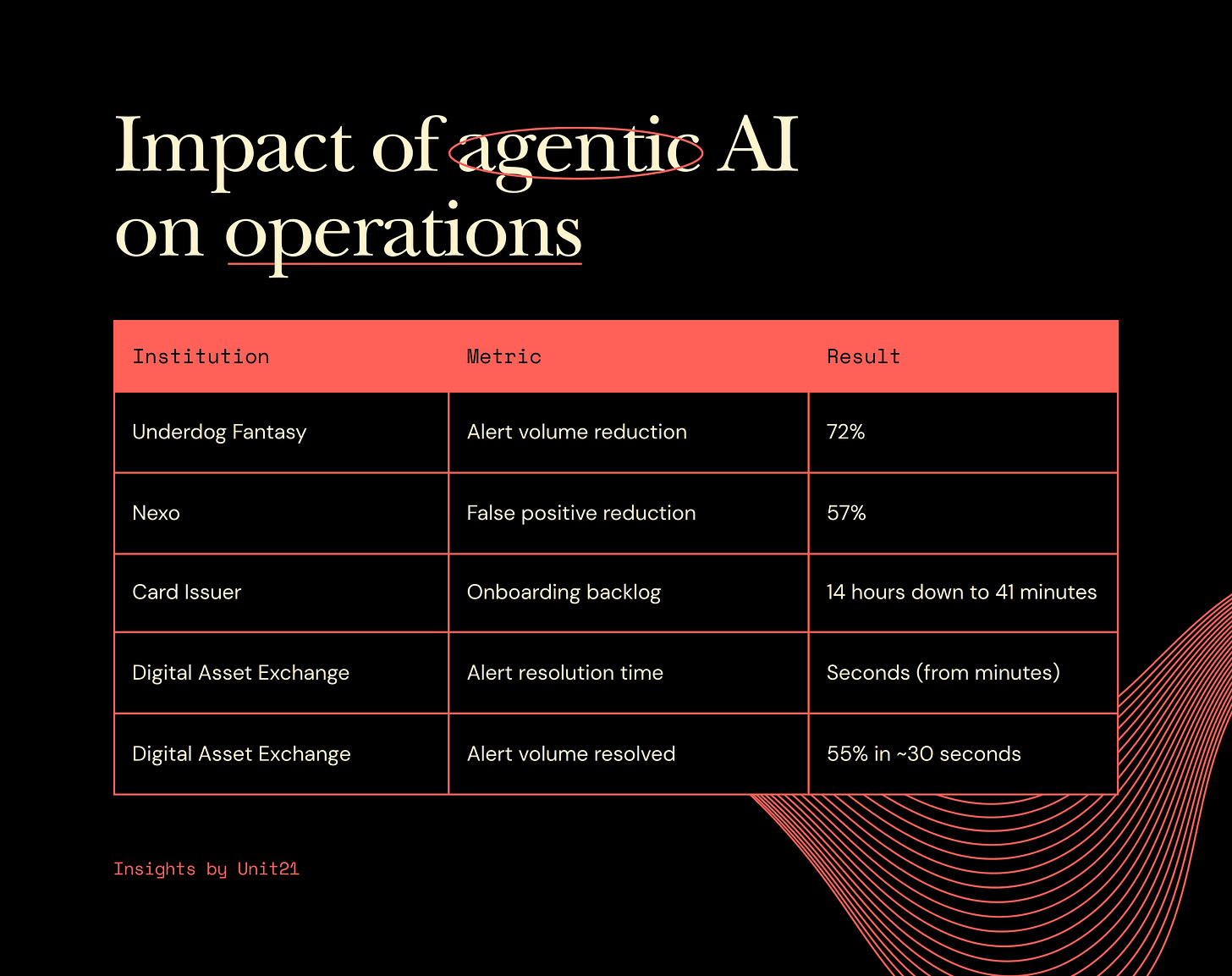

Operational gains and case studies

The results of agentic AI deployment are measurable and significant. For example, according to Unit21, Underdog Fantasy reduced its alert volume by 72% using agentic AI, and Nexo reduced false positives by 57%, with a target of 80%, after deploying Unit21’s AI agents.

Impact of Agentic AI on Operations

In transaction monitoring, agentic AI reduces noise by ranking cases by expected risk and using investigator copilots to summarize context. Feedback loops from analyst decisions allow the models to adapt as typologies change. I find that AI Rule Recommendations are critical here, as they analyze patterns across historical dispositions to suggest threshold adjustments that teams “didn’t know they needed”.
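Both ideas, ranking by expected risk and recommending thresholds from past dispositions, can be illustrated with a toy example. This is a sketch under simplifying assumptions of my own: expected risk is taken as amount times a model's fraud probability, and the recommendation simply raises an amount threshold as far as possible without dropping any historically confirmed case. A real recommendation would need backtesting and analyst sign-off.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    alert_id: str
    amount: float
    fraud_probability: float  # hypothetical model score in [0, 1]


@dataclass(frozen=True)
class Disposition:
    amount: float
    confirmed: bool  # True if an analyst confirmed the alert as suspicious


def rank_by_expected_risk(alerts: list[Alert]) -> list[Alert]:
    """Order the queue so the highest expected loss is reviewed first."""
    return sorted(alerts, key=lambda a: a.amount * a.fraud_probability, reverse=True)


def recommend_threshold(history: list[Disposition], current: float) -> tuple[float, int]:
    """Suggest a higher threshold that keeps every confirmed case; count the noise removed."""
    confirmed_amounts = [d.amount for d in history if d.confirmed]
    if not confirmed_amounts:
        return current, 0  # no confirmed cases, so no evidence to justify a change
    candidate = max(current, min(confirmed_amounts))
    suppressed = sum(1 for d in history
                     if not d.confirmed and current <= d.amount < candidate)
    return candidate, suppressed


queue = rank_by_expected_risk([
    Alert("a1", 10_000, 0.02),
    Alert("a2", 500, 0.90),
    Alert("a3", 3_000, 0.50),
])
history = [Disposition(1_200, False), Disposition(1_500, False),
           Disposition(4_000, True), Disposition(5_000, False), Disposition(9_000, True)]
threshold, suppressed = recommend_threshold(history, current=1_000)
```

Here the large but low-probability alert drops to the bottom of the queue, and the suggested threshold moves from 1,000 to 4,000, which would have suppressed two false positives while keeping both confirmed cases.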

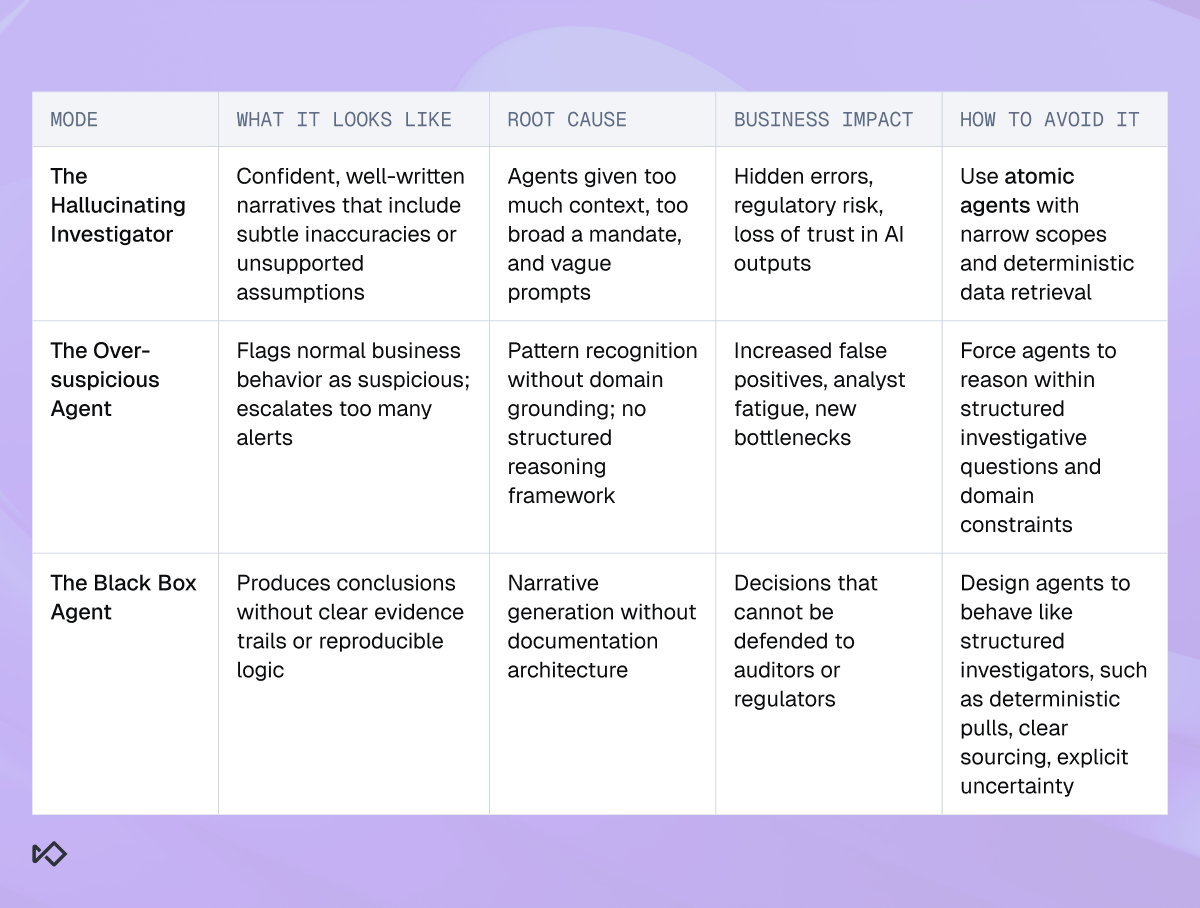

The three failure modes of AI agents

Most early deployments of AI agents fail because of poor guardrails rather than weak models.

The hallucinating investigator: This happens when teams provide too much context and open-ended prompts. In adversarial environments, the model fills data gaps with plausible but incorrect narratives. The solution is to use “atomic agents” with narrow decision boundaries.

The over-suspicious agent: Pattern-driven training without contextual grounding leads to over-escalation. One example is flagging high-value payments between related internal accounts as “layering”. Grounding questions must be injected into the agent’s logic to prevent default conclusions of fraud.

The black box agent: This agent produces conclusions that are not defensible to regulators. Accurate outputs without a chain of evidence are a liability. Agents must pull data deterministically and focus on structured documentation.
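The three remedies can be combined in one small sketch: an "atomic" check that answers a single narrow question, asks grounding questions before concluding anything, and returns its evidence trail alongside the verdict. The names, the three-way verdict, and the 10,000 threshold are all illustrative assumptions, not a description of any production system.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Verdict(Enum):
    ESCALATE = "escalate"
    CLEAR = "clear"
    INSUFFICIENT_EVIDENCE = "insufficient_evidence"


@dataclass(frozen=True)
class Transfer:
    sender_owner: Optional[str]    # beneficial owner of the sending account, if known
    receiver_owner: Optional[str]  # beneficial owner of the receiving account, if known
    amount: float


def layering_check(transfer: Transfer, high_value: float = 10_000.0) -> tuple[Verdict, list[str]]:
    """Atomic agent: decides one thing only, and records why."""
    trail = [f"amount={transfer.amount}",
             f"sender_owner={transfer.sender_owner}",
             f"receiver_owner={transfer.receiver_owner}"]
    # Guard against the hallucinating investigator: never fill a data gap with a guess.
    if transfer.sender_owner is None or transfer.receiver_owner is None:
        trail.append("ownership unknown: refusing to guess")
        return Verdict.INSUFFICIENT_EVIDENCE, trail
    # Grounding question against over-suspicion: are these related internal accounts?
    if transfer.sender_owner == transfer.receiver_owner:
        trail.append("accounts share an owner: treated as an internal transfer")
        return Verdict.CLEAR, trail
    if transfer.amount >= high_value:
        trail.append("high-value transfer between unrelated owners")
        return Verdict.ESCALATE, trail
    trail.append("below high-value threshold")
    return Verdict.CLEAR, trail


verdict, trail = layering_check(Transfer("ACME Ltd", "ACME Ltd", 250_000.0))
```

Because the trail is built from deterministic field reads rather than model output, the same input always yields the same documented reasoning, which is what makes the result defensible.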

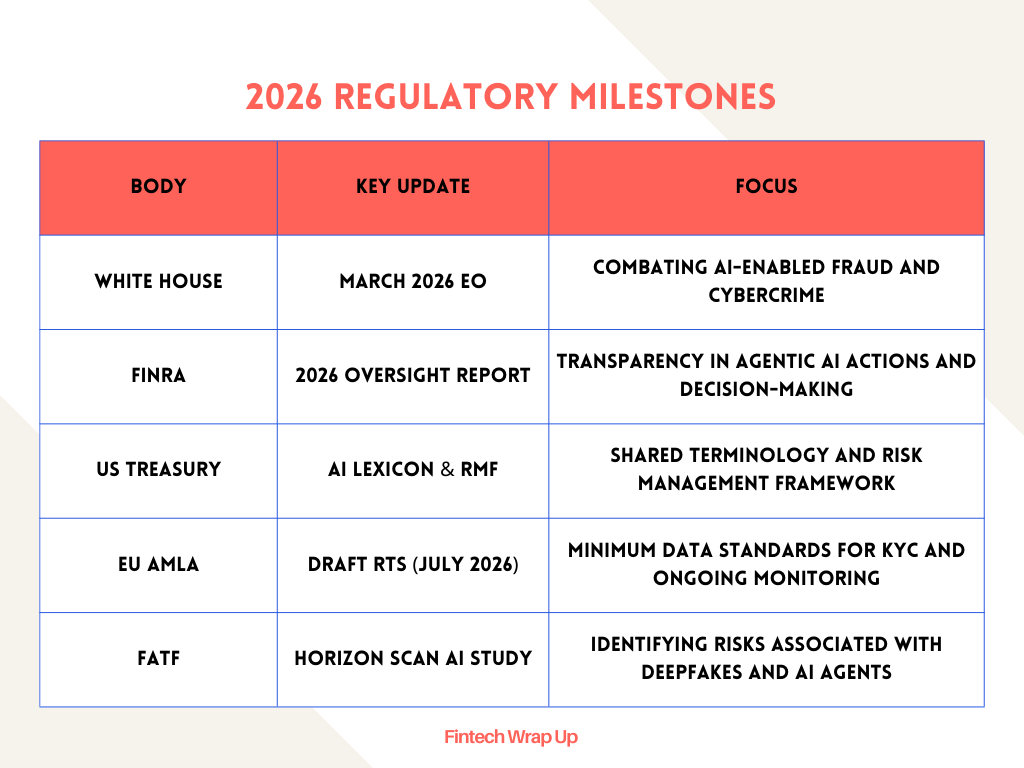

Regulatory environment in 2026

The regulatory stance on agentic AI is shifting from skepticism to expectation. On March 6, 2026, the White House released its National Cyber Strategy and an Executive Order focused on “Combating Cybercrime, Fraud, and Predatory Schemes”. This strategy encourages the use of AI-powered cyber-defense to better protect government networks and scale network defense.

FINRA’s 2026 oversight report includes a dedicated AI section, emphasizing that firms must be able to explain how AI is used and how outputs are tested. Agentic AI raises the stakes because these systems take actions, not just generate content. Transparency into assumptions and accountability for outcomes are now non-negotiable.

2026 Regulatory Milestones

The Financial Action Task Force (FATF) has approved new strategic publications on cyber-enabled fraud and virtual asset risks, encouraging supervisors to leverage innovative technology to return stolen funds to victims. In Europe, banks are moving from static, periodic reviews to “continuous customer understanding” under the scrutiny of the new AML watchdog, AMLA.

Network-level defense

The broader intelligence layer in agentic AI comes from the consortium model. Anonymized, aggregated signals from across a customer base make it possible to identify emerging fraud patterns before any single institution has seen enough volume to detect them independently. This network effect allows agentic systems to suggest logic that counters threats across the entire financial ecosystem.
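A minimal sketch of the idea, assuming each institution shares only keyed hashes of suspicious identifiers: an identifier reported by several institutions surfaces as a network-level signal even though each one saw it only once. The function names and the shared-key setup are hypothetical, and keyed hashing is pseudonymization rather than full anonymization, so a real consortium would need stronger privacy controls than this.

```python
import hashlib
import hmac
from collections import defaultdict
from typing import Iterable


def pseudonymize(identifier: str, shared_key: bytes) -> str:
    """Keyed hash so members can match identifiers without exchanging raw values."""
    normalized = identifier.strip().lower().encode("utf-8")
    return hmac.new(shared_key, normalized, hashlib.sha256).hexdigest()


def network_flags(reports: Iterable[tuple[str, str]], min_institutions: int = 2) -> set[str]:
    """Return tokens reported by at least `min_institutions` distinct institutions."""
    seen: dict[str, set[str]] = defaultdict(set)
    for institution, token in reports:
        seen[token].add(institution)
    return {token for token, members in seen.items() if len(members) >= min_institutions}


key = b"demo-only-shared-key"  # placeholder; real key management is out of scope here
reports = [
    ("bank_a", pseudonymize("mule@example.com", key)),
    ("bank_b", pseudonymize("Mule@Example.com ", key)),
    ("bank_a", pseudonymize("customer@example.com", key)),
]
flagged = network_flags(reports)
```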

I believe that fragmented systems for onboarding, monitoring, and payment screening create gaps that criminals exploit. A unified screening suite that applies the same logic consistently across the full customer lifecycle is essential. Transparency and explainability build trust and ensure decisions remain aligned with both internal policies and external regulations.

Predictive defense and digital workers

As we move through 2026, the distinction between private stablecoins and public digital money is becoming a critical strategic consideration. The fusion of fraud and AML operations is not just operational convergence; it is a deeper integration of the technology stack.

Agentic AI systems are moving from the pilot phase to the core of AML defense. We are seeing a shift from simple pattern recognition to predictive systems that anticipate criminal activity before a transaction is even flagged. I’ll be blunt: legacy rule-based systems cannot keep up with the speed of instant payments.

The path to impact is driven by speed of adoption and a tailored operating model. Leading institutions are starting with pilot perimeters to prove impact before preparing for a full-scale rollout. Agentic AI is the next major innovation lever for KYC/AML, offering stronger compliance and a more streamlined customer experience.

I treat the adoption of agentic AI as a necessity for survival in the modern financial landscape. The $4.4 trillion in illicit activity is a reminder that the cost of inaction is too high. We must move from a workforce of manual executors to one of AI supervisors, managing a digital factory of agents that detect and investigate at machine speed.

Sources:

Nasdaq® Verafin Report Finds the Financial Crime Epidemic Reaching Alarming New Heights as Illicit Financial Activity Surges to $4.4 Trillion in 2025, accessed March 15, 2026, https://ir.nasdaq.com/news-releases/news-release-details/nasdaqr-verafin-report-finds-financial-crime-epidemic-reaching

2026 Global Financial Crime Report - Nasdaq Verafin, accessed March 15, 2026, https://verafin.com/nasdaq-verafin-global-financial-crime-report/

NEWS: Financial crime activity reaches $4.4 trillion per year, growing nearly 20% annually, accessed March 15, 2026, https://www.amlintelligence.com/2026/03/news-financial-crime-activity-reaches-4-4-trillion-per-year-growing-nearly-20-annually/

how-agentic-ai-can-change-the-way-banks-fight-financial-crime.pdf

AI for Financial Crime: Real-Time Threats, Zero Lag - Sardine, accessed March 15, 2026, https://www.sardine.ai/blog/ai-for-financial-crime

Unit21 Relaunches as the Leader in AI Risk Infrastructure - Business Wire, accessed March 15, 2026, https://www.businesswire.com/news/home/20260310768649/en/Unit21-Relaunches-as-the-Leader-in-AI-Risk-Infrastructure

AI Agents for AML and Fraud: Why Unit21 Rebuilt Everything, accessed March 15, 2026, https://www.unit21.ai/blog/the-new-unit21-why-we-rebuilt-everything-around-ai-agents

AI-powered fraud: 5 trends financial institutions need to understand in 2026, accessed March 15, 2026, https://www.thomsonreuters.com/en-us/posts/corporates/ai-powered-fraud-5-trends/

The Future of AML Compliance: Strategic Predictions for 2026 - Feedzai, accessed March 15, 2026, https://www.feedzai.com/blog/future-aml-compliance-predictions/

Unit21 Named to the 2026 RegTech100 List for Agentic AI Innovation - Blog, accessed March 15, 2026, https://www.unit21.ai/blog/unit21-named-to-the-2026-regtech100-list-for-agentic-ai-innovation

Unit21: Agentic AI Platform for Fraud & AML Operations, accessed March 15, 2026, https://www.unit21.ai/

How AI Is Improving AML Software in 2026 | Alessa, accessed March 15, 2026, https://alessa.com/blog/how-ai-is-improving-aml-software-in-2026/

Unit21 Custom AI Agents: Next-Gen Fraud and AML Tools - Blog ..., accessed March 15, 2026, https://www.unit21.ai/blog/how-custom-ai-agents-are-transforming-fraud-and-aml-operations

How to Move Off Legacy Transaction Monitoring with AI | Unit21 - Blog, accessed March 15, 2026, https://www.unit21.ai/blog/ai-powered-transaction-monitoring-replacing-legacy-black-box-systems

Effective context engineering for AI agents - Anthropic, accessed March 15, 2026, https://www.anthropic.com/engineering/effective-context-engineering-for-ai-agents

Sardine’s blog, accessed March 15, 2026, https://www.sardine.ai/blog

AI-driven fraud vectors: 7 agentic attacks now live in 2026 - Sardine, accessed March 15, 2026, https://www.sardine.ai/blog/agentic-attacks

Synthetic Identity Document Fraud Surges 300% in the U.S. ..., accessed March 15, 2026, https://sumsub.com/newsroom/synthetic-identity-document-fraud-surges-300-in-the-u-s-sumsub-warns-e-commerce-healthtech-and-fintech-at-risk/

The 3 Failure Modes of Agentic AI in Financial Crime (and How to ..., accessed March 15, 2026, https://www.sardine.ai/blog/agentic-ai-financial-crime-failure-modes

White House’s New Cyber Strategy and Executive Order Seek to Deter Adversaries and Strengthen Resilience | Crowell & Moring LLP, accessed March 15, 2026, https://www.crowell.com/en/insights/client-alerts/white-houses-new-cyber-strategy-and-executive-order-seek-to-deter-adversaries-and-strengthen-resilience

New National Cyber Strategy and EO Lays Out a Path for Combating Cybercrime and Promoting Innovation - Wiley Rein, accessed March 15, 2026, https://www.wiley.law/alert-New-National-Cyber-Strategy-and-EO-Lays-Out-a-Path-for-Combating-Cybercrime-and-Promoting-Innovation

FINRA 2026 AI Governance: Managing Agentic and Shadow AI Risks - Smarsh, accessed March 15, 2026, https://www.smarsh.com/blog/thought-leadership/finra-2026-oversight-priorities-ai-communications-fraud/

March 2026 Regulatory Update: A $68M Fair Lending Settlement and More - Ncontracts, accessed March 15, 2026, https://www.ncontracts.com/nsight-blog/march-2026-regulatory-update

Horizon Scan AI and Deepfakes - FATF, accessed March 15, 2026, https://www.fatf-gafi.org/en/publications/Methodsandtrends/horizon-scan-ai-deepfake.html

KYC Regulatory Trends for 2026: FATF, FCA & EBA Focus - smartKYC, accessed March 15, 2026, https://smartkyc.com/kyc-regulatory-trends-to-watch-in-2026/

FATF plenary February 2026: Key grey list changes, new strategic publications, and a new presidential appointment - ComplyAdvantage, accessed March 15, 2026, https://complyadvantage.com/insights/fatf-plenary-february-2026-key-updates/

Rethinking Watchlist Screening Solutions for 2026 | Unit21 - Blog, accessed March 15, 2026, https://www.unit21.ai/blog/from-noise-to-precision-watchlist-screening-solutions-for-2026

INSIGHT: Agentic AI and stablecoins – the five trends redefining AML in 2026, accessed March 15, 2026, https://www.amlintelligence.com/2026/01/insight-agentic-ai-and-stablecoins-the-five-trends-redefining-aml-in-2026/

Disclaimer:

Fintech Wrap Up aggregates publicly available information for informational purposes only. Portions of the content may be reproduced verbatim from the original source, and full credit is provided with a “Source: [Name]” attribution. All copyrights and trademarks remain the property of their respective owners. Fintech Wrap Up does not guarantee the accuracy, completeness, or reliability of the aggregated content; these are the responsibility of the original source providers. Links to the original sources may not always be included. For questions or concerns, please contact us at sam.boboev@fintechwrapup.com.
